Blog

Spring Clean Your Finances

Personal loans can be a game-changer in your spring plans. Whether you have a project in mind or want to consolidate your high-interest credit card bills into one lower, fixed-rate payment, it’s a great time to lock in your rate and start saving today! How […]

Navigating the Volatile Housing Market

Should You Buy or Sell Now? The housing market is always changing, and in recent years, it has become more unpredictable. This has left many potential buyers and sellers wondering whether it is the right time to move. Factors Contributing to Housing Market Volatility Several […]

How life events can change your housing needs.

Are you contemplating buying a home this year? Taking such a significant leap forward is a crucial milestone. Life-changing events such as marriage, divorce, job opportunities, or just the desire for a better lifestyle often spur this decision. We’re aware that today’s economic climate and […]

Why it’s a good time to earn more with a 6-Month Certificate

Trying to maximize your savings? That’s smart! But let’s face it, finding the right place to invest that hard-earned cash can be a bit tricky. Why? Well, the financial world can be like a roller coaster – unpredictable and rapidly changing. But don’t worry, we’re […]

2024 TEG Annual Meeting

YOU are the reason we love what we do—improving lives. Helping people get to a better place financially. Member Focused. Member Driven. Together Everyone Grows. Over the past five decades, TEG has grown to serve over 37,000 members and is approaching $420 million in assets. […]

Why the Holidays are a Good Time to Consolidate Your Debts

During the holiday season, you’re likely making a list and checking it twice. But between gifts, travel, and festive celebrations, it’s easy to lose sight of holiday spending and your financial goals. This year, why not add “consolidate my debts” to your wish list? The […]

Thanksgiving Dinner Tips

10 Easy Suggestions for a Stress-Free Thanksgiving Dinner Hosting a Thanksgiving meal can be emotionally overwhelming and break your budget, but it doesn’t have to. Follow these tips for Thanksgiving dinner to enjoy a calm and budget-friendly holiday celebration. Plan Ahead Don’t wait until […]

Free Community Shred Events

In a High Mortgage Rate Environment – What Are Your Options to Buy a Home?

Buying a home is one of the most significant investments you’ll make. Low mortgage rates have made it easier for people to afford their dream homes over the last dozen years. In 2023, home mortgage rates have been soaring, causing problems for people who want […]

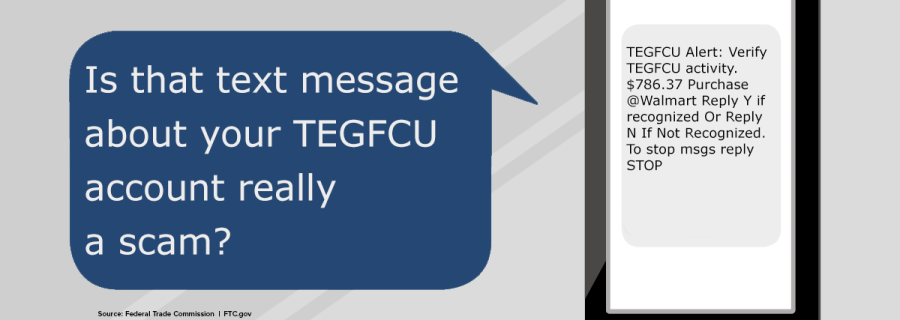

Beware of Text Message Scams

The Federal Trade Commission (FTC) is warning consumers about text message scams. The FTC found that fake bank fraud alerts were the most common text message scam reported in their study. The study also revealed that many popular financial text scams pretend to be from […]

Don’t Be Tricked By Social Engineering Scams

Why Should I Shred My Documents?

Know the Signs of a Scam

How Do I Know If I’m Talking to a Scammer? Know the signs of a scam. The internet has made communicating with others much easier, but it also makes it easier for scammers to find new victims. Scammers are constantly looking for people to take […]

Scam Alert – Members Beware – Telephone and Text Scams

Please be aware of a phishing telephone scam that some credit unions across the country are reporting more frequently. Members are receiving phone calls from fraudsters posing as a credit union employee, who tell them that their account has been compromised or that their credit/debit […]

What is an Adjustable-Rate Mortgage?

What do I need to know about Adjustable-Rate Mortgages? An adjustable-rate mortgage, or ARM, is a home loan with an interest rate that can change periodically. This means that your monthly mortgage payment could go up or down over the life of your loan. ARMs […]

Beware of Online Quiz Scams

Home Warranty Scams

Home warranty scams are rising, with millions of dollars lost to fraudsters each year. These scams can take many forms, but they all share one common goal: stealing your money. Scammers often pretend to be from companies you know and trust. For example, TEG Federal […]

How to Use Zelle to Safely Send Money

Postal Mail Theft

If you live in Hudson Valley, it’s time to get on the defensive regarding mail theft. With rising reports of statements, packages, letters, and other important documents going unchecked due to a significant increase in mail thefts across the state, this is not something NY […]

TEGFCU eStatements

Fast. Secure. Convenient. Always Available. Your bank statement is a vital tool for keeping track of your withdrawals and deposits, but more importantly, it also helps you become aware of suspicious activity and possible fraud. Fast – Avoid USPS postal shortcomings, delays, and lost or […]

Annual Meeting 2023

This year’s 54th Annual Meeting was held: March 30, 2023, 5:30 PM 1 Commerce Street, Poughkeepsie, NY, 12603 Each year, TEG Federal Credit Union hosts an Annual Meeting. Our Annual Meeting is a unique opportunity for credit union members to hear about our financial health […]

TEG 2023 Scholarships

Student Scholarships Available We’re ready to support the future generations of our communities. Our scholarship program is an investment in our future and yours. With a solid education, we can all make a difference. TEG Federal Credit Union will award three $1,000.00 scholarships to graduating […]

Keep Yourself Safe from Scams and Fraud

Renovation Loan

The national trend of homebuyers seeking out properties in need of renovations has established a firm foothold in the Hudson Valley, where low inventory overall and a critically low supply of turnkey houses have repositioned the market. As a result of these shifting sands, many homebuyers are […]

5 C’s of Credit: Capacity

There comes a time when everyone needs to start prioritizing their credit score. Whether you’re applying for a credit card or a loan, your credit score impacts your ability to access these products. There are five major factors to consider, often referred to as the […]

Debt Consolidation Loan

Auto Lease Buyout: Is it Right for You?

It all starts with an auto lease buyout. Simply put, this involves you buying your car when your lease ends, instead of turning it in, beginning a new lease and getting a different car. A car lease buyout is essentially a used car loan – only this time, you’ve already been driving the vehicle for the past few years.

Credit Builder Loans: Personal Loans to Build Credit

Having a good credit score is critical in the age we live in. Having a low score—or no credit history—can hold you back in life. The good news is that you don’t have to live with a low credit score forever. You can use a […]

The Conventional Loan vs. FHA Loans

Home buyers have several financing options to choose from, and two of the most common are conventional loans and FHA loans. If you’re thinking about buying a home, it’s important to understand the differences between the two loans so you can choose the best mortgage […]

The Pros and Cons of Using a Personal Loan to Pay Off Credit Card Debt

Buying things with credit cards is quick and easy—just swipe your card and go. But for many, the ease of making purchases causes their balances to grow to unmanageable levels. If you have one or more high-interest credit cards, a personal loan can be used […]

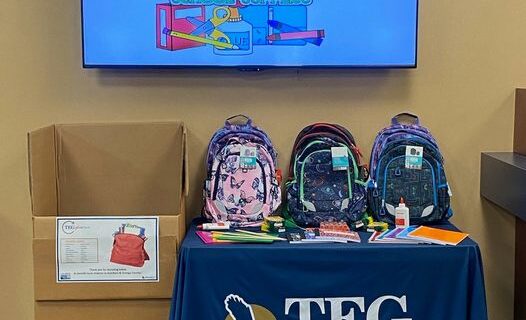

Back-to-School Drive

It’s almost time for kids to head back to school. Let’s help ensure that every student has the basic supplies they need to succeed! At TEG FCU, we feel it is both an honor and privilege to give back to the communities we serve. After […]

What is APR and How Does it Affect Your Mortgage?

The annual percentage rate (APR) is a term you’re likely to encounter while reviewing your mortgage options. Thankfully, it’s not difficult to understand—but what is APR exactly? Knowing how the APR works can help you compare different loans and get the best deal possible. […]

Refinancing a HELOC or Home Equity Loan

If you have either a home equity line of credit (HELOC) or a home equity loan, you may have considered refinancing to get more favorable terms or to save money on interest. Before you refinance, however, there are some important things to consider to make […]

HELOC & Home Equity Loan Tax Deductions

There are many perks to being a homeowner. When you buy a home, for example, the down payment and monthly mortgage payments you make help to grow your equity. Many people take advantage of their home equity by taking out either a home equity line […]

Personal Loan FAQs

Personal loans are among the most common financing options for borrowers. They are highly flexible, relatively easy to obtain, and the interest rates are often lower than other borrowing options, such as credit cards. If you are considering one of these loans, the following are […]

What Is a Debt-to-Income Ratio?

If you are thinking about applying for a loan, you may have encountered the term debt-to-income (DTI) ratio while researching your options. When considering applicants for a loan, lenders evaluate this ratio to make sure borrowers don’t have too much debt. Understanding the DTI ratio […]

Preparing Your Finances for Interest Rate Hikes

Although interest rates have been very low for a while, they are now rising. To cool the high level of inflation we are experiencing, the Federal Reserve is raising interest rates for the first time since 2018. During the uncertain economic times we are now […]

Rising Home Prices and Your Home’s Equity

In just the past two years, home prices all across the nation have increased significantly. If you’ve been wondering what’s going on, the following overview can help you understand why prices are rising and how they affect your home’s equity. Why Are Home Prices Rising […]

HELOC vs. Home Equity Loan When Interest Rates Rise

Many people tap into their home’s equity with either a home equity loan or a home equity line of credit (HELOC). They may use the money they borrow for a home improvement project, to buy new appliances, or for something else. A question that borrowers […]

How Do Home Equity Loans Work?

A common way that many homeowners borrow money is with a home equity loan. The money you borrow can be used for many different purposes, and the interest rates are usually lower than other borrowing options, like personal loans. The following overview can help you […]

Understanding Mortgage Closing Costs

Closing costs are important fees that you should know about if you are considering applying for a mortgage. These fees vary depending on the lender and are usually 2% to 6% of the amount you are borrowing. By understanding how closing costs work and which […]

Guide to Home Equity Lines of Credit (HELOCs)

If you’ve built up some equity in your home, you might be thinking about tapping into the cash to fund renovations and anything else you have on your plate. You might also be wondering how a home equity line of credit works. A home equity […]

Sign up for eStatements & be entered to win a $100 Adams Gift Card

Sign up for e-Statements and get entered to win one of three $100 Adams Fairacre Farms Gift Cards! We at TEG Federal Credit Union don’t want you to get e-Statements because it’s green. Saving trees and time is a great reason for you to want […]

Rising Interest Rates Make Personal Loans a Smart Choice

You’ve probably heard it on the news – the Federal Reserve will raise interest rates this year to help slow inflation. According to many economists, we could see up to six or seven rate hikes in 2022. But what does this mean for you? Will […]

Buying a Fixer-Upper in Hudson Valley, NY

Visiting the Hudson Valley should come with a warning – once you’ve experienced it, you will probably fall in love with it. Whether it’s the spectacular fall foliage, outdoor recreational adventures, breathtaking vistas, historic locations, or something else, each year many people are taken by […]

How to Get the Best Mortgage Rate in Hudson Valley, NY

It’s not hard to understand why so many want to move to Hudson Valley, NY. The area is blessed with incredible natural beauty, and it has a rich history and culture. A foodie paradise, it has interesting restaurants and endless farmers’ markets to explore. It’s […]

Payday Loans vs. Personal Loans [What You Need to Know]

When many find themselves in financial binds and need quick cash, they often turn to payday loans. These loans are quick and easy to obtain, and the funds are usually available the same day you apply. Although payday loans are convenient, they have some important […]
